Why post-click testing matters for web2app growth

Most growth teams treat creative testing as an ad problem. They test hooks, formats, and copy across dozens of ad variations every week, shipping new creative concepts constantly to keep performance stable.

What gets far less attention is creative testing after the click—the funnel experience users see once they land on your website. In many web-to-app campaigns, multiple ad creatives built around different angles all lead into the same funnel that hasn’t changed since launch.

This article explores how creative testing works in web-to-app funnels. It covers the post-click layers (welcome screen framing, quiz arc, pre-paywall warm-up, and offer design) and how to test them so that results are attributable.

💡 Web2app ad volume grew 254% on average in 2025, with creatives per brand reaching into the thousands among top performers. (FunnelFox State of web2app report 2026)

Ad creative production is accelerating fast. Funnel iteration is not keeping pace—and that gap is where conversion is being lost.

Funnel as a creative and conversion asset

A web2app funnel is not a neutral pipeline. Every screen makes a creative decision: what to say, what emotion to lead with, what to show before asking for a commitment. Those choices shape whether users complete the quiz, arrive at the paywall with enough trust to convert, and ultimately which plan they select.

Think of it this way: the funnel continues the conversation the ad started. When an ad leads with a specific angle—time efficiency, stress relief, career progression—the funnel either picks up that thread or drops it. Users who click a stress-relief ad and land on a generic quiz intro will feel the mismatch. They may not be able to name it. But it shows up in drop-off rates at the first screen and lower completion across the quiz.

Your funnel = extension of your ad. Web lets you continue the conversation started in your ad.

Source: LinkedIn

Keeping that continuity (and testing it systematically) produces the same kind of compounding improvement that ad creative testing delivers. Funnel testing is still relatively rare, which means the advantage from doing it well is currently larger than it will be in two years.

What post-click creative testing covers

Post-click optimization is sometimes used as a synonym for CRO in the narrow sense: button placement, color testing, headline variants on a single screen. Those tests have a place. But funnel creative testing is broader and should include narrative and structural decisions that determine whether the funnel earns enough trust to move users to the paywall.

In practice, that means running experiments across four layers:

- Messaging and framing on each screen—whether the ad angle is actually carried through the quiz

- The emotional entry point the funnel opens with, and how different framings affect quiz start rate and completion

- The structure and information sequencing—what appears when, and in what order

- The offer itself: how it’s framed, what defaults are set, what appears in the pre-paywall zone

All four layers have a measurable impact on revenue. Track ARPU as the primary metric across funnel experiments, with conversion rate as a secondary signal. A change that improves conversion rate while reducing average plan value may not be a genuine gain.
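A small calculation makes the trade-off between conversion rate and ARPU concrete. The sketch below uses invented numbers and hypothetical names (`VariantResult`, `summarize`), and it assumes ARPU means total revenue divided by all users who entered the funnel; adjust the definition to match your own reporting.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Per-variant totals. All figures below are invented for illustration."""
    name: str
    users: int        # users who entered the funnel
    purchases: int    # users who paid
    revenue: float    # total revenue from those purchases


def summarize(v: VariantResult) -> dict:
    """Return conversion rate, average plan value, and ARPU for one variant."""
    return {
        "name": v.name,
        "conversion_rate": v.purchases / v.users,
        "avg_plan_value": v.revenue / v.purchases if v.purchases else 0.0,
        # Assumption: ARPU = revenue per user who entered the funnel
        "arpu": v.revenue / v.users,
    }


control = VariantResult("control", users=10_000, purchases=300, revenue=9_000.0)
variant = VariantResult("variant", users=10_000, purchases=360, revenue=8_640.0)

for row in (summarize(control), summarize(variant)):
    print(
        f"{row['name']}: CR={row['conversion_rate']:.2%}, "
        f"plan=${row['avg_plan_value']:.2f}, ARPU=${row['arpu']:.3f}"
    )
```

In this made-up case the variant converts at 3.6% against 3.0% for control, but its average plan value drops from $30 to $24, so ARPU falls from $0.90 to $0.864. Judged on conversion rate alone, it would look like a win.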

What elements to test in web2app funnels

1. Narrative alignment: matching the funnel to the ad angle

The core question in funnel testing: does the funnel reflect what the user was shown in the ad? Different ad angles bring users with different motivations. A funnel built around one motivation will underperform for users who arrived through a different one.

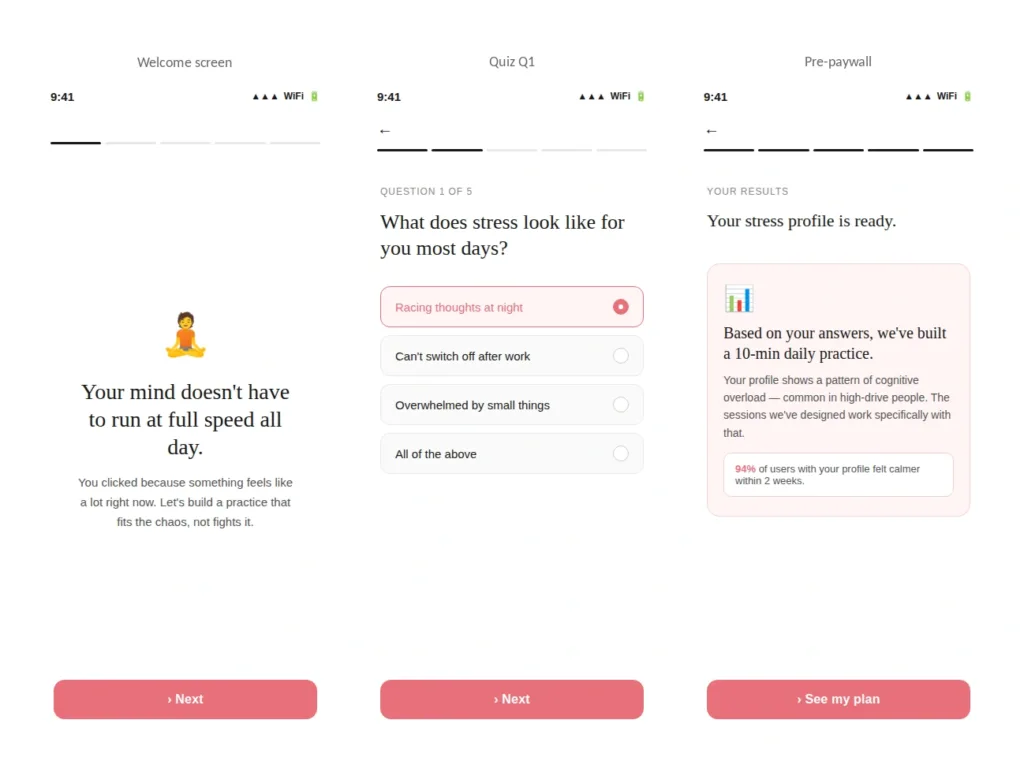

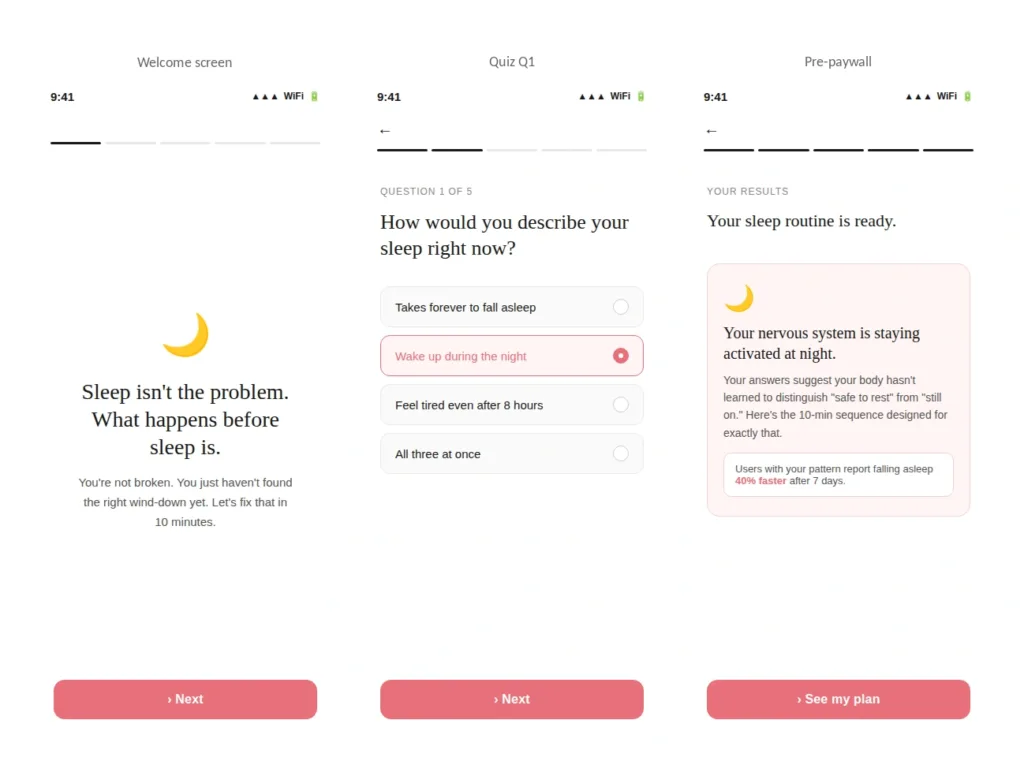

Consider a meditation app running two campaigns in parallel—one targeting users dealing with chronic stress, one targeting users who struggle with sleep. Both audiences are valuable, but they arrived with different needs and expectations. A funnel built for the stress audience will underperform for the sleep audience, and vice versa. Building two funnel versions that each reflect the right angle is not a large investment. The conversion difference is.

Below are two funnel versions built for those two audiences. Quiz structure and paywall are held constant—only the framing on each screen changes.

Ad angle A, Stress: “Finally quiet your mind. 10 minutes a day.”

Funnel example: meditation app, stress angle

Ad angle B, Sleep: “Wake up actually rested. Start tonight.”

Funnel example: meditation app, sleep angle

To isolate the narrative effect, keep the paywall identical across both versions and measure quiz completion and paywall conversion separately. When the funnel reflects why the user clicked, they engage with the questions more readily—and arrive at the paywall with higher intent.

💡 Best practice: Test a full narrative arc as a single hypothesis. A common mistake in funnel testing is treating individual screens as the unit of experiment. A narrative angle spans several screens: the welcome, the opening questions, the feedback copy. Those elements work together, so testing one screen while leaving the others unchanged tends to produce inconclusive results. The practical approach: build two full arc variants, changing all three to five screens that carry the angle, and test them as a single hypothesis. Hold the paywall constant. That gives you a clean read on whether the arc drives conversion, separate from any offer effect.

2. First-screen framing and its effect on quiz start rate

Over 50% of users drop off after the first screen, before answering a single question. (FunnelFox State of web2app report 2026) That makes the welcome screen one of the highest-leverage surfaces in the funnel. A small framing change here affects how many users enter the quiz at all—which cascades through every downstream metric.

The framing dimensions most worth testing on the welcome screen:

- Outcome framing vs. problem framing: “Get fit on your lunch break” vs. “Stop starting over every Monday”

- Identity-driven copy that names the user’s self-perception: “For people who’ve tried everything and are done starting over”

- Time commitment signal—whether naming the session length upfront helps or hurts quiz start rate

- Progress framing, such as showing the estimated quiz length (“2-minute quiz”) to reduce friction

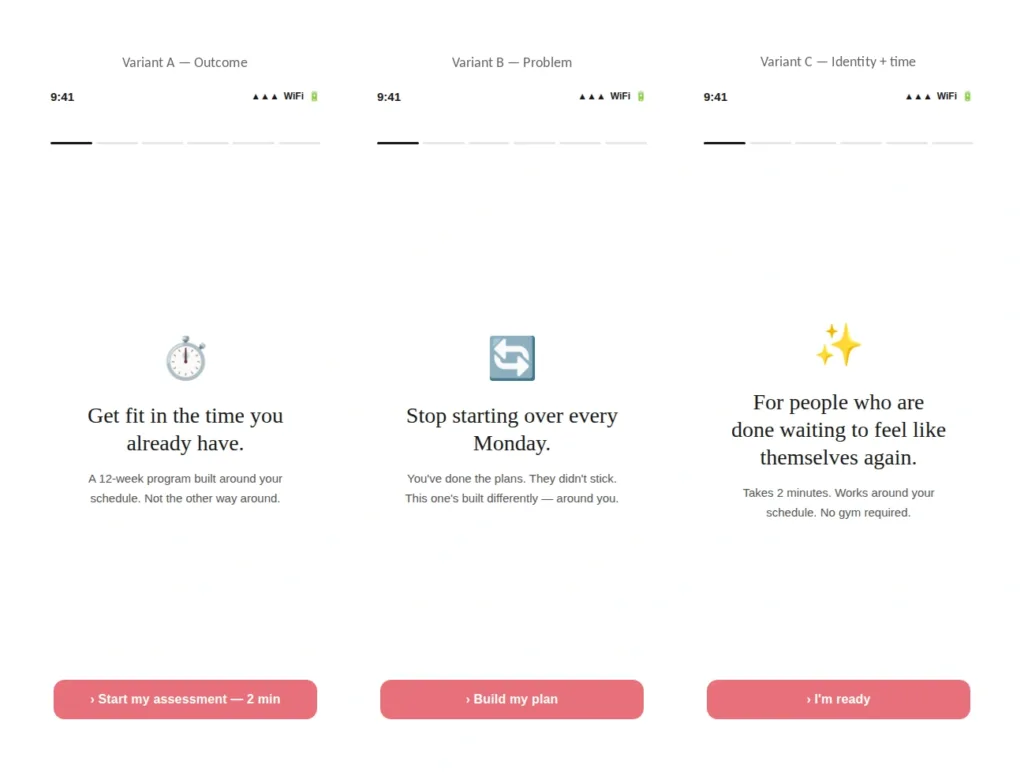

These can be tested as isolated changes on the first screen with everything else held constant. Welcome screen tests are fast to ship and fast to read—a good starting point for teams new to post-click optimization. Below are three variants of a fitness app welcome screen:

Funnel example: fitness app, three welcome screen variants

Track quiz start rate and quiz completion separately for each variant. Outcome-led framing tends to perform better for audiences from performance-focused ads. Identity-led framing tends to outperform for audiences from more emotionally resonant creatives. Your traffic data will tell you which applies.
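Tracking the two rates separately matters because a variant can win on one and lose on the other. The sketch below is a minimal illustration with invented step counts; the function name `funnel_rates` and the variant labels are hypothetical, and it assumes you can count users who landed, started the quiz, and completed it.

```python
def funnel_rates(landed: int, started: int, completed: int) -> dict:
    """Compute quiz start rate and quiz completion rate from step counts."""
    if not (landed >= started >= completed >= 0):
        raise ValueError("expected landed >= started >= completed >= 0")
    return {
        # Share of users landing on the welcome screen who answered a question
        "start_rate": started / landed if landed else 0.0,
        # Share of users who started the quiz and reached the end
        "completion_rate": completed / started if started else 0.0,
    }


# Invented counts per welcome screen variant: (landed, started, completed)
variants = {
    "outcome": (5_000, 2_400, 1_680),
    "problem": (5_000, 2_600, 1_560),
    "identity": (5_000, 2_250, 1_800),
}

for name, counts in variants.items():
    rates = funnel_rates(*counts)
    print(
        f"{name}: start={rates['start_rate']:.1%}, "
        f"completion={rates['completion_rate']:.1%}"
    )
```

With these illustrative numbers the identity variant has the lowest start rate (45%) but the highest completion rate (80%), and it delivers the most users to the end of the quiz. Reading only the start rate would have ranked it last.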

3. Funnel structure and information sequencing

Beyond what the funnel says, how information is structured and sequenced has a significant effect on completion rates. The main variables to test:

- Quiz funnel vs. advertorial: a quiz builds personalization and investment; an advertorial goes deeper on a single angle before making any ask

- Short quiz vs. long quiz: audiences from high-commitment ads (for example, a long-form video or a detailed testimonial) will often tolerate more questions than audiences who clicked a short direct-response creative

- Information sequencing: when social proof appears, when the product outcome is described, how long before the user sees pricing

The right structure depends on where your audience came from and what intent they arrived with. Test structural variables separately, without changing the paywall at the same time. Otherwise you won’t be able to attribute the result.

4. The pre-paywall zone

The screens between the last quiz question and the paywall are often treated as a transition rather than a creative layer. In practice, this zone determines the state of mind a user arrives at the paywall with—and it has a measurable effect on conversion.

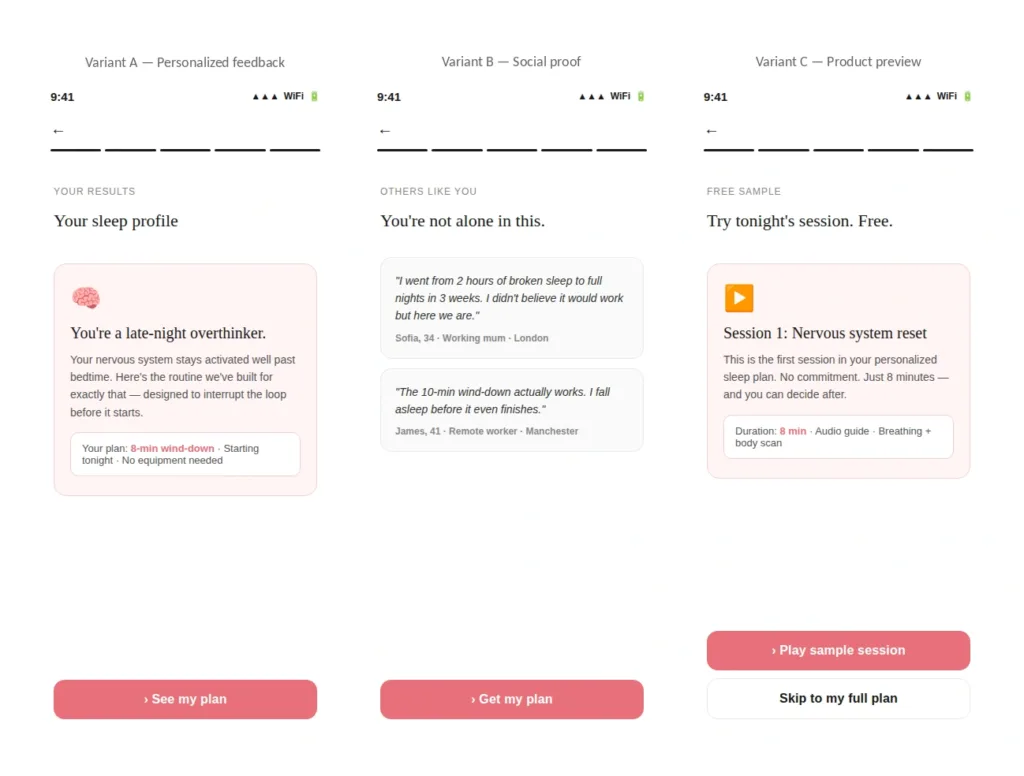

The main pre-paywall variants to test:

- A personalized feedback screen based on quiz answers, vs. a generic results summary

- Social proof from users with a similar profile, shown before pricing

- A product preview—a sample session, a sample lesson, a feature walkthrough—that reduces the perceived risk of paying before experiencing the product

- Expert credibility signals alongside or instead of user testimonials

Funnel example: sleep & wellness app, pre-paywall variants

Personalized feedback tends to convert better for users who engaged emotionally in the quiz. Social proof works better for users who need external validation before committing. Product previews are most effective for skeptical users who want to experience the product first.

Test each variant separately and measure paywall click-through rate and ARPU together—a variant that increases trial starts but reduces average plan value is not a clear improvement.

5. Email collection placement and framing

Where the email capture sits in the funnel—and how it’s framed—affects both opt-in rate and revenue recovery. Users who reach the paywall but don’t convert can be re-engaged through CRM and retargeting.

Mid-funnel email collection can recover 10–20% of the revenue otherwise lost to those drop-offs when followed up correctly.

The main things to test:

- Placement: before the quiz, after the quiz, or after the paywall has been shown

- Request framing: “Save your personalized plan” vs. “Get your results by email” vs. no reason given

In longer funnels, users have invested enough time that they’re more willing to share an email by the end of the quiz. In shorter funnels, an email gate before the quiz may add more friction than it’s worth. Measure opt-in rate alongside downstream revenue recovery—not just collection volume.

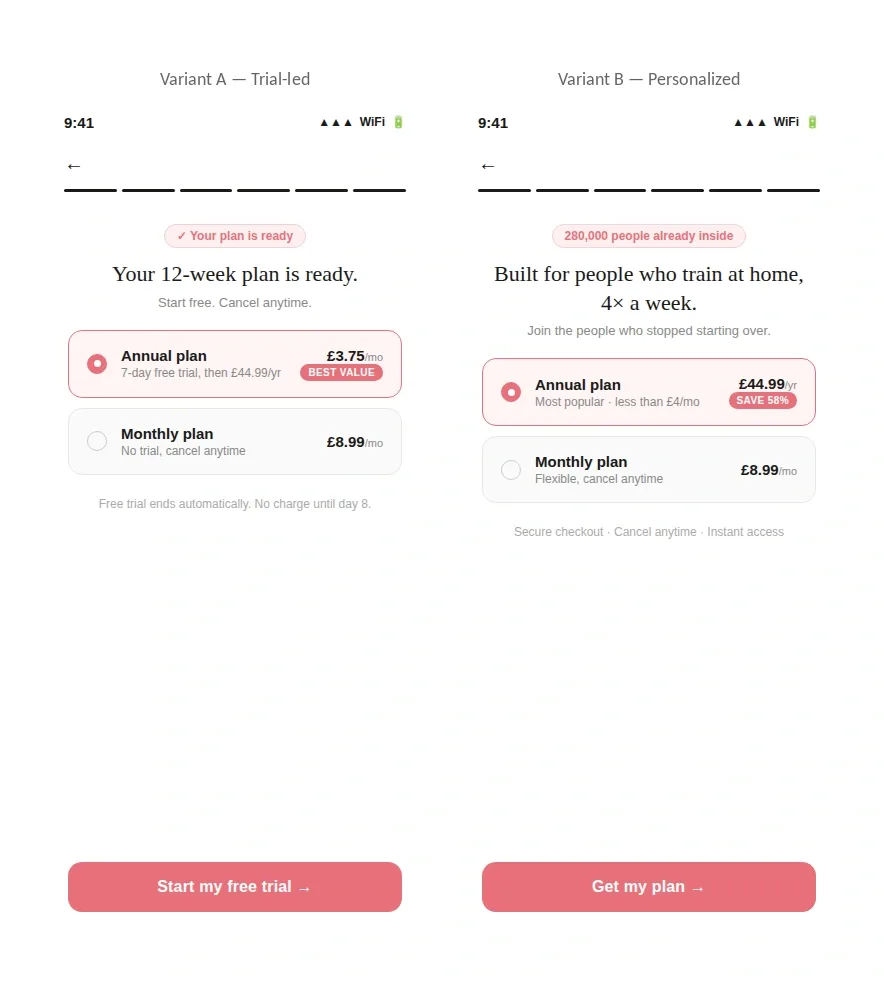

6. Offer structure at the paywall

How the offer is framed and structured, not just which plans are available, has a meaningful effect on conversion rate and average plan value.

The main dimensions to test:

- Trial vs. intro price: “Try free for 7 days” vs. “$1.99 for the first month”

- Pricing presentation: daily equivalent (“Less than a coffee a day”) vs. monthly or annual total

- Anchoring: showing the annual plan before the monthly plan to shift the perceived reference point

- Personalized headline copy based on quiz answers, vs. a generic plan description

- Default plan selection: which plan is highlighted when the paywall loads

Funnel example: fitness app, paywall variants

Trial-led offers reduce commitment anxiety for first-time buyers, which improves top-of-funnel conversion. Personalized framing tends to perform better for users from community- or transformation-oriented ads, as the headline reflects the identity they invested in during the quiz.

How ad creative testing and funnel testing work together

Ad creative testing and funnel testing are most valuable when they work as a connected loop rather than separate workstreams. Winning ad angles generate hypotheses for funnel experiments.

If a time-scarcity angle outperforms a generic one in ad testing, the next step is to build a funnel that reflects that angle and see whether it converts better for users coming through that specific creative.

The loop also runs the other way. Quiz responses—specifically which answers correlate with higher conversion—can be passed back to Meta or TikTok to help the algorithm find more users who match high-converting profiles.

The funnel generates targeting intelligence, not just conversion data. The questions you include in a quiz matter beyond the onboarding experience itself.

Build a funnel library like you build your creative library. Top web2app advertisers run dozens of funnels simultaneously—each tailored to a specific messaging angle, some even tracked on a separate data set.

Source: LinkedIn

The combination to build toward: ad narrative × funnel narrative × offer. Even a modest matrix—two ad angles, two funnel versions, two paywall structures—creates a meaningful testing surface. Teams that treat it as something to be explored systematically generate the most consistent revenue growth from web2app channels.
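The size of that matrix is easy to enumerate. A minimal sketch, with invented angle and variant names, that lists every cell of a two-by-two-by-two test surface and picks out the cells where the funnel matches the ad angle:

```python
from itertools import product

# Hypothetical labels; replace with your own angles and variants
ad_angles = ["stress", "sleep"]
funnel_versions = ["stress_arc", "sleep_arc"]
paywalls = ["trial", "intro_price"]

# Every combination of ad narrative x funnel narrative x offer
cells = [
    {"ad": ad, "funnel": funnel, "paywall": paywall}
    for ad, funnel, paywall in product(ad_angles, funnel_versions, paywalls)
]

# Cells where the funnel arc carries the same angle as the ad
matched = [c for c in cells if c["funnel"].startswith(c["ad"])]

print(f"{len(cells)} cells in total, {len(matched)} with matching narrative")
for cell in matched:
    print(cell)
```

Two options per layer already give eight cells, four of which keep the ad and funnel narrative aligned. Not every cell needs traffic; the matched cells are usually the natural place to start.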

💡 FunnelFox data shows a strong correlation between the number of funnel versions and revenue. Top-quartile performers run 100+ funnel iterations. (FunnelFox State of web2app report 2026)

How leading web2app teams structure funnel experiments

Teams that approach funnel testing well tend to share a few habits. They build funnel variants to match distinct ad angles rather than routing all traffic into one flow. They cross-reference quiz answer patterns with conversion rates to find underperforming segments, using those findings to surface new audience opportunities, not just optimize for the existing one. And they iterate on narrative and offer structure in parallel rather than sequentially, which shortens the feedback loop between hypothesis and result.

Web converts ~3% after a longer journey (quiz → personalization → paywall) while in-app converts faster but hits an LTV ceiling. Web funnels have more levers to test and optimize—but also more ways to lose a user.

When to run an A/B test vs. launch a new funnel

The choice depends on two things: traffic volume and the scope of the change.

An A/B test makes sense when you have enough traffic to reach significance in a reasonable timeframe and when the hypothesis targets your current audience with a specific change: a different quiz arc, a revised pre-paywall screen, a new offer format. To detect a 20% relative lift on a 1% baseline conversion rate, you need roughly 40,000 users per variant. At lower volumes, waiting for significance is often impractical.
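The traffic requirement can be estimated before launching a test. The sketch below uses the standard normal-approximation formula for comparing two proportions, and assumes a two-sided test at 5% significance with 80% power. Those settings, and the function name `users_per_variant`, are assumptions rather than figures from the report; other settings will give different numbers.

```python
from math import ceil
from statistics import NormalDist


def users_per_variant(
    baseline: float,
    relative_lift: float,
    alpha: float = 0.05,   # assumed two-sided significance level
    power: float = 0.80,   # assumed statistical power
) -> int:
    """Approximate users needed per variant for a two-proportion z-test."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    # Sum of the Bernoulli variances of the two conversion rates
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


# 1% baseline conversion, 20% relative lift (1.0% -> 1.2%)
print(users_per_variant(baseline=0.01, relative_lift=0.20))
```

Under these assumptions the estimate comes out a little under 43,000 users per variant, in line with the rough figure of 40,000. Halving the detectable lift roughly quadruples the requirement, which is why small effects are hard to confirm on low-volume funnels.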

A new funnel works better when traffic is limited, when you’re targeting a different audience segment, or when the change is big enough that running it as a variant would feel disjointed. Testing a new audience (different age group, geography, or use case) is cleaner with a dedicated funnel and campaign. The traffic stays uncontaminated and the results are easier to attribute.

Using funnel data to find new audience segments

One underused benefit of funnel testing: it surfaces audience segments teams weren’t aware of.

By looking at quiz answer patterns alongside conversion rates, you can spot clusters of users who respond differently from the majority and convert at a different rate. Those clusters often represent motivations or use cases the existing funnel doesn’t speak to.

A language-learning app, for example, might find that a portion of users consistently picks travel as their primary motivation—while the entire funnel and ad ecosystem is built around professional development. That segment may convert at a lower rate not because the product is a poor fit, but because nothing in the funnel acknowledges why they’re there. Adding travel-focused questions, adjusting the feedback screen, and testing a travel-oriented ad angle can improve conversion for that group and show whether it’s large enough to build a dedicated flow around.
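The kind of breakdown described above can be sketched in a few lines. The example assumes each session is recorded as a pair of the quiz answer and whether the user purchased; the data, the answer labels, and the function name `conversion_by_answer` are all invented for illustration.

```python
from collections import defaultdict


def conversion_by_answer(sessions):
    """Group sessions by quiz answer; return {answer: (users, conversion_rate)}."""
    totals = defaultdict(lambda: [0, 0])  # answer -> [users, purchases]
    for answer, purchased in sessions:
        totals[answer][0] += 1
        totals[answer][1] += int(purchased)
    return {
        answer: (users, purchases / users)
        for answer, (users, purchases) in totals.items()
    }


# Invented sessions: (primary motivation picked in the quiz, purchased?)
sessions = (
    [("career", True)] * 40 + [("career", False)] * 960
    + [("travel", True)] * 6 + [("travel", False)] * 294
)

for answer, (users, rate) in conversion_by_answer(sessions).items():
    print(f"{answer}: {users} users, {rate:.1%} conversion")
```

In this made-up data the travel segment is nearly a quarter of all sessions but converts at 2% against 4% for career. A gap like that, on a segment of meaningful size, is the signal that a tailored funnel may be worth testing.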

The sources for finding these gaps are consistent: competitor funnels and creatives, app store reviews, in-app behavior data. A single audience often has several distinct motivations. Funnel data helps you measure which ones are large enough to act on.

How to scale creative testing in web-to-app funnels

The main constraint on funnel testing has historically been build time. When each new funnel variant requires developer involvement and a multi-week production cycle, most hypotheses never get tested. The ideas exist. The bottleneck is execution.

FunnelFox can help remove that constraint. The platform lets growth teams build and launch full web2app funnels—quiz-based onboarding, paywalls, checkout, upsell flows—without writing code.

A/B tests include full statistical reporting: conversion rate, ARPU, confidence intervals, p-values, and observed power.
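For readers who want to see what sits behind numbers like a p-value and a confidence interval, here is a generic two-proportion z-test using only the Python standard library. It is a textbook sketch with invented counts, not a description of how FunnelFox computes its reports, and it does not cover ARPU or observed power.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_test(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """Two-sided z-test and confidence interval for a difference in conversion."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a

    # Pooled standard error under the null hypothesis of equal rates
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = diff / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # Unpooled standard error for the interval around the observed difference
    se_diff = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    margin = NormalDist().inv_cdf(1 - alpha / 2) * se_diff
    return {"diff": diff, "p_value": p_value, "ci": (diff - margin, diff + margin)}


# Invented counts: control 300/10,000 purchases, variant 360/10,000
result = two_proportion_test(conv_a=300, n_a=10_000, conv_b=360, n_b=10_000)
low, high = result["ci"]
print(f"diff={result['diff']:.4f}, p={result['p_value']:.4f}, "
      f"95% CI=({low:.4f}, {high:.4f})")
```

With these numbers the interval for the difference excludes zero and the p-value is below 0.05. Revenue metrics such as ARPU are typically skewed, so they usually call for a different method (a bootstrap, for example) than this conversion-rate test.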

Messaging, flow structure, and offer design can be iterated independently. A new quiz arc can be tested without touching the paywall, and a new offer format can be tested without changing the quiz. The goal is to bring funnel iteration in line with the speed growth teams already apply to ad creative.

Post-click conversion: the next growth lever

Ad creative testing will remain an important part of web2app growth. But for teams that have already invested in rigorous ad testing, the funnel is often where the next gains are. It’s less commonly tested, and small changes in framing, structure, and offer design compound across every user who arrives from every ad.

The starting point is simple: one ad angle, one matching funnel, measured separately from the rest of your traffic. Each layer—welcome screen, quiz arc, pre-paywall zone, paywall offer—is a testable variable with a measurable effect on revenue.

Teams that bring the same rigor to funnel testing as they do to ad creative build a compounding advantage in post-click conversion, one that competitors need time to match.

Related reading: Creative fatigue: how to detect post-click burnout